Key Takeaways

| Topic | What You Need to Know |
|---|---|
| Data Collection | Cloud AI writing tools process everything you type on remote servers, including passwords, client info, and private messages |
| Scale of Risk | 40% of organizations have already experienced an AI-related privacy incident (Protecto, 2025) |
| GDPR Exposure | Cumulative GDPR fines reached €5.88 billion by January 2025; cloud AI tools are a major source of violations |
| User Trust | 78% of consumers refuse to use cloud AI features when they understand the privacy implications |
| The Alternative | On-device AI processing keeps your data on your device: no server uploads, no third-party access |
| Best Option | CleverType processes AI suggestions locally, meaning your writing never leaves your phone |

Every time you use a cloud-based AI writing tool, your words travel to a remote server, get processed by a third-party system, and, depending on the tool, may never truly be deleted. A 2025 report by Protecto found that 15% of employees have already pasted confidential information into public AI chatbots without really thinking about what that means. That's roughly one in seven people at your company, potentially exposing client data, business strategies, or personal details to systems they don't control. So why does everyone keep using these tools? Mostly because they have no idea there's a better option.

What Cloud AI Writing Tools Actually Do With Your Data

Cloud-based AI writing tools send your text to remote servers for processing, and what happens to that data afterward is rarely as transparent as these companies want you to think.

Most people assume AI writing assistance happens on their device. It doesn't. Tools like Grammarly, ChatGPT-based writing assistants, and similar cloud products require your text to make a round trip: it leaves your device, travels to a server farm, gets processed by large language models, and comes back as a suggestion. That entire journey happens in milliseconds, which is why it feels instant.

The problem isn't the speed. It's what happens during and after that journey.

Grammarly's own privacy policy confirms that user data is shared with third-party service providers including Amazon Web Services. The company uses aggregated data to improve its AI models. That's a polite way of saying your writing contributes to training data. When you type a draft email about a client negotiation or a personal health issue, those words potentially feed back into a system millions of other people use. Not great.

Here's what cloud AI tools typically collect:

- Everything you type — this is unavoidable for cloud processing to work

- Usage metadata — which features you use, when, and for how long

- Device and browser information — including IP address and location

- Correction patterns — what you changed and what you accepted

- Account-linked data — especially if you're signed into a paid tier

The Stanford Foundation Model Transparency Index tracks how open AI companies are about their data practices. Scores dropped from 58/100 in 2024 to just 40/100 in 2025, meaning these tools are getting less transparent over time, not more. And that drop happened right as adoption hit record highs. Not a great sign.

For personal use, it's uncomfortable. For professionals handling sensitive information (lawyers, doctors, HR teams, executives), it's a real legal and ethical liability.

The Real Privacy Risks: What the Data Actually Shows

The privacy risk from cloud AI writing tools isn't theoretical: 40% of organizations have already experienced an AI-related privacy incident.

Let's put some actual numbers on this. According to Protecto's 2025 AI Data Privacy Statistics:

- 40% of organizations report experiencing an AI-related privacy incident

- 15% of employees have pasted sensitive data into public AI chatbots

- 70% of adults don't trust companies to use AI responsibly

- 81% of adults expect companies to misuse their personal data

And the financial stakes? IBM's Cost of a Data Breach report puts the average breach cost for professional services firms at $5.08 million. A single incident, such as one employee pasting confidential contract terms into a cloud AI tool, can trigger that exposure.

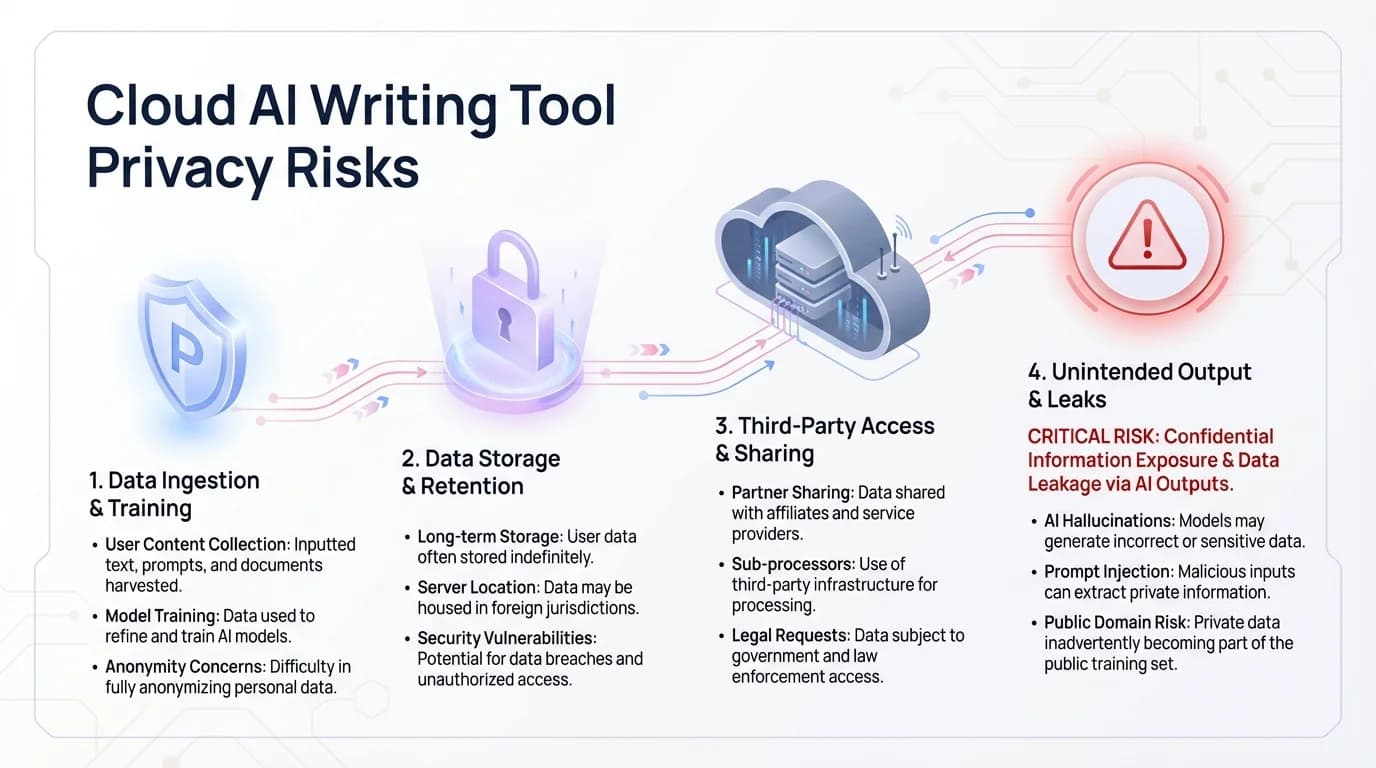

But it's not just about data retention. There are specific ways these tools create risk:

1. Prompt Injection Attacks

Malicious content in a document you paste can instruct the AI to extract and transmit sensitive context from your session. It sounds technical, but it's a pretty straightforward exploit.

2. Data Retention Policies

Most cloud AI tools hold onto your inputs for varying periods. Some use them for model training indefinitely unless you manually opt out — and even then, data that's already been processed probably isn't getting fully deleted.

3. Third-Party Subprocessor Chains

When a writing tool outsources to AWS, Google Cloud, or Azure, your data passes through multiple privacy agreements. One weak link anywhere in that chain exposes everything.

4. Shadow AI

Employees use AI tools without IT approval all the time. IBM research found that 35% of data breaches now involve "shadow data" — stuff shared with systems outside formal IT oversight. Cloud writing tools are a major driver of this.

5. Cross-Context Contamination

Use the same cloud AI account for personal and work writing? The tool builds a profile connecting both contexts. That profile lives on servers you don't control and probably won't ever see.

The Incogni 2025 AI Privacy Ranking named Meta AI the worst offender, with the most extensive data collection and the most third-party sharing. ChatGPT and Grok landed somewhere in the middle. The common thread across all of them: cloud processing means your data is their data, at least for a while.

Key privacy risk statistics from cloud-based AI writing tools — data exposure, breach incidents, and the hidden threats of prompt injection and shadow AI

GDPR and Legal Compliance: Why Cloud AI Creates Serious Headaches

Using cloud AI writing tools with personal or client data likely violates GDPR — and regulators are actively enforcing this.

The EU's General Data Protection Regulation doesn't care how fast the AI processes your text. It cares about consent, purpose limitation, and data minimization. Cloud AI writing tools regularly fail on all three.

Purpose Limitation (Article 5(1)(b))

Say you use a writing tool to draft a client email, and the tool quietly trains its model on that text. That's using data beyond what it was collected for. Your client never consented to having their info feed an AI model — and neither, probably, did you.

Special Category Data (Article 9)

Writing tools can end up processing health information, political views, or religious content buried in everyday text, all of which require explicit consent under GDPR. An HR person drafting a medical leave policy, a journalist covering politics: both are sending special category data to cloud systems just by doing their jobs.

Right to Erasure (Article 17)

Users technically have the right to have their data deleted from AI training sets. In practice? Once data's been baked into model weights, removing it is nearly impossible. Most companies won't even try.

Data Minimization

Cloud tools collect a lot more than they need to give you writing suggestions. Your location, device fingerprint, usage patterns: none of that is necessary to fix a comma splice.

The enforcement numbers tell you everything you need to know. GDPR fines hit €5.88 billion cumulatively by January 2025, with €1.2 billion handed out in 2024 alone. The EU AI Act adds another layer: full enforcement starts August 2026, with penalties of up to €35 million or 7% of global turnover for non-compliant AI systems.
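To make the AI Act ceiling concrete, here is a minimal Python sketch. It assumes the "whichever is higher" reading that the regulation's penalty provision applies to larger companies, which is our reading rather than something spelled out above. The function name and the turnover figures are purely illustrative.

```python
def ai_act_max_fine_eur(global_turnover_eur: int) -> int:
    """Upper bound of the top-tier EU AI Act fine for a large company.

    Assumption: the ceiling is the higher of a fixed EUR 35 million
    and 7% of global annual turnover.
    """
    fixed_cap = 35_000_000
    # Integer arithmetic avoids floating-point rounding on large sums.
    turnover_cap = global_turnover_eur * 7 // 100
    return max(fixed_cap, turnover_cap)


# Hypothetical turnover figures, for illustration only.
for turnover in (100_000_000, 500_000_000, 2_000_000_000):
    ceiling = ai_act_max_fine_eur(turnover)
    print(f"turnover {turnover:>13,} EUR -> ceiling {ceiling:>11,} EUR")
```

Under that assumption the two caps meet at €500 million in turnover. Below it the fixed €35 million figure sets the ceiling; above it the 7% figure does.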

For lawyers, it gets even more pointed. ABA Formal Opinion 512 (July 2024) says that lawyers using AI tools need to understand whether systems are self-learning and whether confidential client information is transmitted to external databases. Standard engagement letter boilerplate isn't enough. Only 10% of law firms have actual AI usage policies, despite 79% of lawyers using AI tools in practice.

The math is simple: data that never leaves your device can't trigger a GDPR violation. On-device AI isn't just a privacy preference — for a lot of professionals, it's the only option that actually holds up legally.

How On-Device AI Processing Changes Everything

On-device AI processing means your text never leaves your phone — no server upload, no third-party access, no data breach risk from cloud infrastructure.

This isn't some futuristic concept: modern phones already have dedicated AI chips capable of running serious language models locally. The question isn't whether on-device AI works. It does. The question is whether the tools you're using have bothered to build it that way.

The privacy wins are real and measurable:

| Protection | Cloud AI | On-Device AI |
|---|---|---|
| Data transmission | Every keystroke sent to server | Nothing leaves device |
| Third-party access | Multiple subprocessors | Zero |
| Breach exposure | Full cloud attack surface | Eliminated |
| GDPR complexity | High (multiple obligations) | Minimal |
| Offline capability | Requires internet | Works anywhere |
| Audit trail | Controlled by provider | Fully local |

Research from Tech Research Online found that 78% of consumers refuse cloud AI features once they actually understand the privacy implications. And 91% said they'd pay more for on-device processing to protect their privacy. The demand is absolutely there; the products just haven't caught up.

And here's the thing about adoption: the same research found on-device AI products see 3x higher feature adoption rates than cloud equivalents. That's entirely driven by trust. People actually use features they trust. They quietly avoid the ones that feel like surveillance.

Apple's approach is worth looking at. Their Private Cloud Compute (PCC) architecture handles as much as possible on-device, and when cloud processing is genuinely needed, it uses hardened servers that even Apple's own employees can't access. That's the bar people are starting to expect.

For writing, on-device AI handles grammar correction, tone adjustment, smart replies, and context-aware suggestions, all without any data leaving your phone. The latency is comparable to cloud, often better, because there's no network round trip involved.

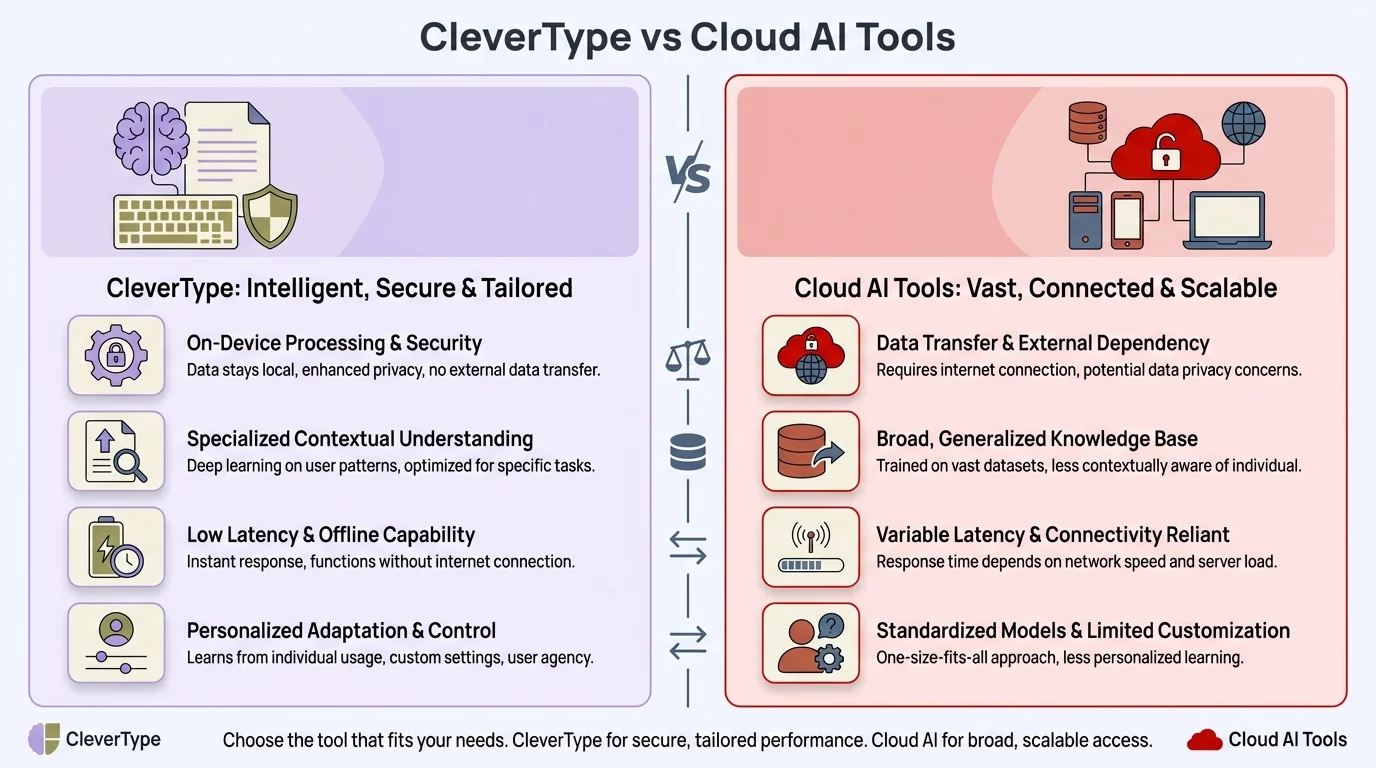

CleverType does exactly this. The AI keyboard runs its core processing on-device, so your grammar corrections, smart suggestions, and writing assistance happen without your words ever touching an external server. Unlike Grammarly (which routes everything through AWS) or ChatGPT writing integrations, CleverType's whole design starts from the assumption that your data should stay with you.

What Happens When Things Go Wrong: Real Incidents and Consequences

Cloud AI writing tools have already caused real-world privacy incidents, from legal sanctions to corporate data exposure.

The risks aren't hypothetical anymore. Here's what it looks like in practice:

The Mata v. Avianca Case (2023)

Lawyers submitted case citations generated by ChatGPT, citations that turned out not to exist. They faced serious court sanctions. The deeper issue: cloud AI tools present outputs with false confidence, and professionals using them have zero visibility into what data shaped those outputs.

Samsung's Internal Data Leak

Samsung engineers accidentally leaked proprietary semiconductor data by pasting internal documents into ChatGPT for assistance. The data was processed on OpenAI's servers, potentially becoming part of training datasets. Samsung banned ChatGPT internally after that.

Healthcare Sector Exposure

275 million health records were exposed in the US healthcare sector during 2024, largely through cloud-based tool vulnerabilities. Doctors and nurses using cloud AI writing tools to draft patient notes created direct HIPAA exposure. Not through hacking — just through normal everyday use.

The Shadow AI Problem

IBM research found that 57% of organizations can't track, control, or report on content shared with external AI services. Employees use cloud writing tools on personal devices and through browser extensions, and paste company data into them without IT knowing anything about it. Traditional security perimeters were never built for this.

What makes all of this especially bad is that you usually can't undo it. Once your text has been processed and potentially folded into training data, there's no practical way to take it back. GDPR's "right to erasure" exists on paper; actually removing specific text from model weights is technically near-impossible.

IBM puts the average time to identify and contain a breach involving stolen credentials at 328 days. Nearly a year of exposure before anyone even knows there's a problem.

For individuals, consequences range from embarrassment to identity theft. For organizations, you're looking at regulatory fines, damaged client relationships, and the cost of breach response. Gartner estimates 99% of cloud security incidents come down to human error or misconfiguration, and using cloud AI writing tools without proper governance is exactly what that looks like in practice.

Enterprise AI Privacy: The Stakes Are Much Higher

For enterprise teams, the privacy risks of cloud AI writing tools multiply with every employee using them, and most organizations don't have adequate controls in place.

Only 18% of organizations have actually implemented AI governance frameworks, despite 90% using AI in daily work. That gap, roughly 72 percentage points' worth of enterprises running AI tools with basically no oversight, is where the real exposure sits.

Competitive Intelligence

Executives drafting strategy docs, sales teams writing proposals, engineers documenting architecture — all of that passes through cloud AI servers. Competitors and regulators can't directly access that data under normal circumstances, but the attack surface is real and it grows with every person on your team using these tools.

Client Confidentiality

Law firms, accounting firms, consultancies: they handle client information with specific confidentiality obligations attached. Every time that client data gets processed on an external server through a cloud AI writing tool, you risk breaching those obligations. That's not a hypothetical; that's just how it works.

Regulatory Sector Requirements

Financial services firms are now under EU DORA (effective January 2025), with specific operational resilience requirements. Healthcare runs under HIPAA. Both conflict directly with how most cloud AI writing tools handle data.

Data Residency

Most cloud AI tools process data in US data centers by default. For EU organizations, that creates cross-border transfer issues that technically require Standard Contractual Clauses — which nobody's thinking about when they copy-paste text into a writing assistant at 9am.

CleverType's on-device approach scales. When everyone on a team uses an AI writing tool that processes locally, the organization's overall exposure drops dramatically. No centralized breach point. No bulk data sitting with third parties. No compliance headache from everyday writing assistance.

When evaluating any AI writing tool, the first question should be: "Where does this process my data?" If the answer is "our servers" or "cloud infrastructure," that's a red flag. It means vendor assessments, DPIAs, and probably legal review before anyone on your team touches it.

CleverType vs Cloud AI writing tools: on-device processing eliminates the privacy risks and compliance headaches that come with cloud-based alternatives

How to Spot a Privacy-First AI Writing Tool

A genuinely private writing tool processes your data on-device, has a clear privacy policy, and doesn't need cloud connectivity to function.

Not every tool that claims to be "private" actually is. Here's a quick checklist for evaluating any AI writing tool before you trust it with sensitive text:

Questions to Ask Before Using Any AI Writing Tool

- Where does processing happen? — Does it work offline? If yes, on-device processing is likely. If it requires constant internet, it's cloud-based.

- What does the privacy policy say about training data? — Look for specific language about whether your inputs are used to improve the model. Vague statements about "improving your experience" usually mean yes.

- Who are the subprocessors? — A legitimate privacy-focused tool will list exactly which third parties, if any, handle your data. Long lists of subprocessors are a warning sign.

- Is there a zero-data-retention option? — For enterprise use specifically, look for tools that offer contractual guarantees that data isn't retained beyond the immediate session.

- Has it been independently audited? — SOC 2 Type 2 certification is a baseline standard for data security. Look for it.

- Does it work in airplane mode? — This is the simplest privacy test. Open the tool, turn off WiFi and mobile data, and try to use it. If it fails completely, your data needs their servers.

Red Flags in Privacy Policies

- "We may use your data to improve our products" — means training on your inputs

- "We share data with trusted partners" — means third-party access

- "Data may be retained for up to [X] months" — means your inputs aren't immediately deleted

- No mention of on-device processing anywhere in the documentation
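As a rough illustration of how the red-flag list above could be turned into a first-pass check, here is a minimal Python sketch. The patterns, labels, and the `scan_policy` helper are assumptions made up for this example. Real policies phrase these things in many ways, so a match is a prompt to read more closely, not a verdict.

```python
import re

# Phrase patterns adapted from the red-flag list above; real policies
# word these differently, so treat matches as prompts for a closer read.
RED_FLAGS = {
    "may train on your inputs": r"use your (data|content|information) to improve",
    "third-party access": r"share (your )?(data|information) with (trusted )?(partners|third parties)",
    "inputs are retained": r"retained for (up to )?\d+ (days|months|years)",
}


def scan_policy(policy_text: str) -> list[str]:
    """Return the red-flag labels whose pattern appears in the policy text."""
    text = policy_text.lower()
    hits = [label for label, pattern in RED_FLAGS.items() if re.search(pattern, text)]
    # The last red flag is an absence: no mention of on-device processing.
    if "on-device" not in text and "on device" not in text:
        hits.append("no mention of on-device processing")
    return hits


# Hypothetical policy excerpt built from the phrases in the list above.
sample = (
    "We may use your data to improve our products. "
    "We share data with trusted partners. "
    "Data may be retained for up to 12 months."
)
for flag in scan_policy(sample):
    print("red flag:", flag)
```

A keyword scan like this only narrows down where to look. The airplane-mode test remains the more direct check, because it observes what the tool does rather than what its policy says.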

Green Flags

- Explicit "on-device processing" language

- Works without internet connectivity

- Minimal subprocessor list (ideally none)

- Clear data deletion procedures

- Independent security certifications

CleverType checks all of these. The AI keyboard runs core processing locally, doesn't need cloud connectivity for its primary features, and was built as a private AI tool from day one, not a cloud tool with privacy claims stapled on afterward. Unlike Gboard (which sends your typing data to Google) or SwiftKey (which routes through Microsoft), CleverType starts from the assumption that your words belong to you.

CleverType: AI Writing Assistance Without the Privacy Trade-Off

CleverType is an on-device AI keyboard that offers grammar fixing, tone adjustment, smart replies, and AI suggestions without sending your text to external servers.

The good news is there's a direct solution, and you don't have to sacrifice functionality to get there. CleverType packs the same core features as cloud competitors into an on-device architecture that keeps your data private by design.

| Feature | Cloud AI Tools | CleverType |
|---|---|---|
| Grammar & spell check | Cloud-processed | On-device |
| Tone adjustment | Cloud-processed | On-device |
| Smart replies | Cloud-processed | On-device |
| Multilingual support | Cloud-processed | 100+ languages, local |
| Data leaves device | Yes | No |
| Works offline | Usually not | Yes |
| GDPR complexity | High | Minimal |
| Training on your data | Often yes | No |

All the features that actually matter for daily writing are there. Grammar correction catches typos and structural issues in real time. The tone change feature shifts your writing from casual to professional without rewriting by hand. Smart replies suggest responses for messages based on context. All on-device.

For anyone in law, medicine, finance, or consulting, CleverType's on-device approach makes it basically the only AI writing tool that doesn't create a compliance problem. Client details, case summaries, patient notes, financial analyses: all stay on your device. The AI helps. The risk doesn't come with it.

CleverType also handles 100+ languages on-device, which matters more than people realize for multilingual teams and international professionals. Cloud tools often push non-English text through extra processing layers, creating additional exposure. CleverType does it all locally.

The privacy-first approach extends to customization too. Your themes, layouts, and personal vocabulary stay on your device. None of your typing habits get shipped off to improve someone else's model. Grammarly built its business on aggregate user writing data. Microsoft SwiftKey syncs yours across devices through their cloud. CleverType just... doesn't do any of that.

Download CleverType from the Play Store and see the difference between AI that helps and AI that harvests.

Frequently Asked Questions

Are cloud-based AI writing tools really that risky for everyday use?

Honestly, yes, especially if you ever type personal details, work info, or anything sensitive. Even casual use creates a data trail on servers you don't control. 40% of organizations have already experienced AI-related privacy incidents, and everyday use like this is part of how they happen.

Does Grammarly sell my writing data to third parties?

Grammarly shares your data with third-party providers including Amazon Web Services, and uses aggregated writing data to improve its models. They claim it's anonymized, but independent security researchers have pointed out that anonymization is rarely as complete as companies want you to believe.

What is on-device AI processing and why does it matter for privacy?

On-device AI means the model runs entirely on your phone or computer — no data goes to remote servers. It matters because your text never touches third parties, can't be intercepted in transit, and isn't exposed if the provider's cloud gets breached.

Is CleverType GDPR compliant?

CleverType processes everything on-device, so your writing data never leaves your phone. That simplifies GDPR compliance considerably: no cross-border data transfers to manage, no third-party subprocessors touching your data, no cloud retention period to worry about.

Can I use an AI writing tool for legal or medical documents without privacy risk?

Using cloud AI tools for legal or medical documents creates real compliance risk under GDPR, HIPAA, and professional ethics rules. On-device tools like CleverType cut that risk entirely — confidential information never leaves your device and never touches an external server.

What's the difference between privacy-focused AI tools and regular AI writing assistants?

The core difference is where the processing happens. Cloud AI writing tools send your text to remote servers and may keep it. Privacy-focused tools, especially on-device ones, run the AI locally, so your text is never transmitted anywhere and can't be accessed by third parties.

How can I tell if an AI writing tool is actually processing data on-device?

The simplest test: turn off WiFi and mobile data and see if the tool still works. If core features function offline, on-device processing is happening. Also look for explicit "on-device" language in the privacy policy, and check whether they list cloud providers like AWS or Google Cloud as subprocessors.

Ready to Type Smarter?

Upgrade your typing with CleverType AI Keyboard. Fix grammar instantly, change your tone, receive smart AI replies, and type confidently while keeping your privacy.

Download CleverType Free

Available on Android • 100+ Languages • Privacy-First


Sources

- AI Data Privacy Statistics & Trends 2025 — Protecto

- Gen AI and LLM Privacy Ranking 2025 — Incogni

- AI and Privacy: 2024 to 2025 Global Developments — Cloud Security Alliance

- On-Device AI: The Future of SaaS Privacy & Compliance — Tech Research Online

- Compliance Challenges at the Intersection of AI & GDPR in 2025 — Secure Privacy

- Engineering GDPR Compliance in the Age of Agentic AI — IAPP

- AI Privacy Risks: Protecting Client Data in 2025 — LeanLaw